Pytest Coverage and Test Summary in PR Comment

In today’s fast-paced software development environment, Continuous Integration (CI) is crucial for ensuring code quality and streamlining the development process. A well-defined CI workflow can automatically run tests, generate coverage reports, and upload these reports for further analysis. In this blog, we’ll walk you through setting up a CI workflow in GitHub Actions to run Pytest and upload coverage reports.

Why did the QA engineer bring a ladder to work? To reach the high test coverage!

Before diving into the CI workflow, it’s important to understand the benefits of using Pytest and having test coverage reports:

Improved Code Quality: Test coverage reports provide insights into which parts of your codebase are covered by tests and which are not. This helps identify untested parts of the code that may contain bugs.

Increased Confidence: With high test coverage, developers can be more confident that changes to the codebase won’t introduce new bugs. This is especially important in large codebases with multiple contributors.

Simplified Maintenance: Well-tested code is easier to refactor and extend. Test coverage reports ensure that future changes do not break existing functionality.

Better Collaboration: Coverage reports foster a culture of accountability and collaboration among team members, as everyone can see the current state of test coverage and work together to improve it.

This guide delves into setting up an advanced CI workflow using GitHub Actions, tailored specifically for running Python Pytest, uploading coverage reports, and integrating test summaries into GitHub pull requests (PRs). The workflow automates testing processes, enhances code quality through comprehensive coverage metrics, and facilitates collaboration by providing actionable insights directly within the development workflow. Key features include terminating the workflow on xyz service unit test failure or error and monitoring coverage percentage to ensure code quality and reliability. By following this comprehensive guide, teams can streamline CI/CD processes, improve software reliability, and accelerate their development cycles effectively.

There is an existing workflow set up to build and upload images to ECR.

The Pytest workflow runs on the deployed image built during the pull request process.

This guide encompasses two essential workflows designed to streamline CI processes:

1. Reusable Workflow: Run Pytest and Upload Coverage

This workflow is the cornerstone of our CI setup. It orchestrates the testing and coverage reporting process for various services. It is invoked by service-specific workflows and performs the following tasks:

Checks out the codebase using GitHub Actions.

Sets up environment variables required for testing.

Executes tests tailored for each service or module (e.g., abc-service and xyz-service).

Captures and uploads coverage reports and test summaries to GitHub.

Integrates with AWS services and Docker registries as needed.

2. Caller Workflow: Module Services

This workflow triggers the execution of the Reusable Workflow based on specific events like pull requests, pushes to designated branches, new releases, or manual triggers. It ensures that the Reusable Workflow runs with the correct parameters and secrets, facilitating automated testing and validation of changes to the xyz-module/service directory.

By implementing these workflows, development teams can maintain high standards of code quality, automate testing across different service modules, and leverage GitHub Actions to enhance their CI/CD capabilities effectively.

Let’s dive into the detailed YAML configuration of our CI workflow.

The workflow begins by defining its name and the events that trigger it. In this case, we use workflow_call so that other workflows can invoke it.

    name: Run Pytest and Upload Coverage

    on:
      workflow_call:
        inputs:
          service_name:
            required: true
            type: string
          service_path:
            required: true
            type: string
          docker_registry:
            required: true
            type: string
        secrets:
          aws_access_key:
            required: true
          aws_secret_key:
            required: true
          gcr_token:
            required: true
          repo_owner:
            required: true
          aws_s3_bucket:
            required: true

The workflow contains a single job named Pytest-workflow, which runs on the latest Ubuntu environment. This job has several steps, each performing a specific task.

First, we set up the environment variables and check out the code from the repository.

    jobs:
      Pytest-workflow:
        name: Run Tests and Coverage
        runs-on: ubuntu-latest
        permissions:
          id-token: write
          contents: write
          pull-requests: write
        env:
          SERVICE_NAME: ${{ inputs.service_name }}
          DOCKER_REGISTRY: ${{ inputs.docker_registry }}
        steps:
          - name: Check Out Code
            uses: actions/checkout@v2
            with:
              token: ${{ secrets.gcr_token }}

Next, we assume an AWS role and log in to Amazon ECR to manage Docker images. This is crucial for accessing necessary resources and ensuring secure communication with AWS services.

          - name: Assume AWS Role
            uses: aws-actions/configure-aws-credentials@v1
            with:
              role-to-assume: arn:aws:iam::********:role/github-action-aws-access
              role-duration-seconds: 7000
              aws-region: us-east-2

          - name: Login to Amazon ECR
            id: login-ecr
            uses: aws-actions/amazon-ecr-login@v2

We then set environment variables and run tests for the different services, generating coverage reports for each. This ensures that all changes are properly tested and coverage metrics are updated accordingly.

The step below sets up the necessary environment variables, prepares the environment, and executes the tests for the xyz-service. Here’s a detailed breakdown of each part of this step:

          - name: Set Environment Variables and Run Test
            if: ${{ (github.event_name == 'pull_request') && (env.SERVICE_NAME == 'xyz-service') }}
            id: xyz-coverage
            env:
              GITHUB_TOKEN: ${{ secrets.GITHUB_TOKEN }}

name: Describes the purpose of the step.

if: Ensures the step runs only when the event that triggered the workflow is a pull request and the SERVICE_NAME environment variable is set to xyz-service.

id: Provides a unique identifier for this step, useful for referencing the step’s outputs in later steps.

env: Sets the GITHUB_TOKEN environment variable from the token that GitHub Actions provides to each workflow run.

            run: |
              cd deployment/tests/
              echo "" > .env
              SHORT_COMMIT_SHA=`echo ${GITHUB_SHA} | cut -c1-8`
              branch_name=${GITHUB_REF##*/}
              component_name=${SERVICE_NAME}
              S3_bucket=${{ secrets.aws_s3_bucket }}
              S3_path="s3://${S3_bucket}/${branch_name}/${component_name}"
              build_version=$(aws s3 cp --quiet ${S3_path}/${component_name}.version - | awk -F';' '{print $2}')
              echo "xyz_DOCKER_IMAGE_NAME=$DOCKER_REGISTRY/$component_name:${branch_name}-${SHORT_COMMIT_SHA}" >> .env
              # .env files use KEY=VALUE syntax
              echo "IS_TESTING=true" >> .env
              docker-compose -f docker-compose.xyz-test.yml up -d
              # "|| true" keeps this step from failing so that later steps can report the results
              docker exec -u root tests_xyz-service_1 python3 -m pytest api/tests --durations=10 -n 4 --cov --cov-report xml:/usr/src/app/coverage.xml --junitxml=pytest.xml || true
              docker cp tests_xyz-service_1:/usr/src/app/coverage.xml xyz-coverage.xml
              docker cp tests_xyz-service_1:/usr/src/app/pytest.xml xyz-pytest.xml
              echo "coverage=true" >> $GITHUB_OUTPUT

Step-by-Step Breakdown:

1. Change Directory

    cd deployment/tests/

Changes the current working directory to deployment/tests/, where the Docker Compose file is located.

2. Initialize .env File

    echo "" > .env

Creates a fresh .env file, discarding any previous content.

3. Set Short Commit SHA

    SHORT_COMMIT_SHA=`echo ${GITHUB_SHA} | cut -c1-8`

Extracts the first 8 characters of the commit SHA to use as a short identifier for the build.

4. Extract Branch Name

    branch_name=${GITHUB_REF##*/}

Takes the last path segment of the GitHub reference as the branch name. Note that for pull request events GITHUB_REF has the form refs/pull/<number>/merge, so this expansion yields merge; the source branch name is available in GITHUB_HEAD_REF instead.

5. Set Component Name

    component_name=${SERVICE_NAME}

Sets the component name to the value of the SERVICE_NAME environment variable.

6. Set S3 Bucket and Path

    S3_bucket=${{ secrets.aws_s3_bucket }}
    S3_path="s3://${S3_bucket}/${branch_name}/${component_name}"

Sets the S3 bucket from a secret stored in GitHub Actions secrets and builds the S3 path from the branch and component names.

7. Fetch Build Version

    build_version=$(aws s3 cp --quiet ${S3_path}/${component_name}.version - | awk -F';' '{print $2}')

In our case, the build version is stored in an S3 bucket, so this command streams the version file from S3 and takes its second semicolon-separated field.

8. Set Environment Variables

    echo "xyz_DOCKER_IMAGE_NAME=$DOCKER_REGISTRY/$component_name:${branch_name}-${SHORT_COMMIT_SHA}" >> .env
    echo "IS_TESTING=true" >> .env

Writes the Docker image name and a flag indicating testing mode to the .env file, using the KEY=VALUE syntax that .env files expect.

9. Start Docker Containers

    docker-compose -f docker-compose.xyz-test.yml up -d

Uses Docker Compose to start the necessary Docker containers defined in the docker-compose.xyz-test.yml file.

10. Run Tests in Docker Container

    docker exec -u root tests_xyz-service_1 python3 -m pytest api/tests --durations=10 -n 4 --cov --cov-report xml:/usr/src/app/coverage.xml --junitxml=pytest.xml || true

Executes the tests inside the tests_xyz-service_1 Docker container using Pytest. The trailing || true keeps the step from failing when tests fail, so that the later steps can still publish the reports.

--durations=10: Shows the 10 slowest test durations.

-n 4: Runs tests in parallel using 4 processes (provided by the pytest-xdist plugin).

--cov --cov-report xml:/usr/src/app/coverage.xml: Generates a coverage report in XML format (provided by the pytest-cov plugin).

--junitxml=pytest.xml: Generates a JUnit XML report.

11. Copy Coverage Report

    docker cp tests_xyz-service_1:/usr/src/app/coverage.xml xyz-coverage.xml
    docker cp tests_xyz-service_1:/usr/src/app/pytest.xml xyz-pytest.xml

Copies the coverage report and the Pytest report from the Docker container to the host machine.

12. Set Coverage Output

    echo "coverage=true" >> $GITHUB_OUTPUT

Sets the coverage output to true, indicating that coverage data is available for further steps in the workflow.

This step ensures that the environment is correctly set up, the necessary tests are executed, and the resulting coverage reports are available for analysis and reporting.
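To make the tag and .env logic from the breakdown easier to reason about, here is a minimal Python sketch of the same string handling. It is an illustration only, not part of the workflow: the function names are hypothetical, and preferring GITHUB_HEAD_REF when it is set is an assumption of this sketch rather than something the original step does.

```python
from __future__ import annotations


def short_sha(sha: str, length: int = 8) -> str:
    """Return the first `length` characters of a commit SHA (like `cut -c1-8`)."""
    return sha[:length]


def branch_from_ref(ref: str, head_ref: str = "") -> str:
    """Return the last path segment of a ref (like `${GITHUB_REF##*/}`).

    Assumption of this sketch: when `head_ref` (GITHUB_HEAD_REF, populated on
    pull request events) is non-empty, it is used instead of `ref`.
    """
    source = head_ref or ref
    return source.rsplit("/", 1)[-1]


def env_file_lines(
    registry: str, service: str, ref: str, sha: str, head_ref: str = ""
) -> list[str]:
    """Build the two KEY=VALUE lines that the step appends to .env."""
    tag = f"{branch_from_ref(ref, head_ref)}-{short_sha(sha)}"
    return [
        f"xyz_DOCKER_IMAGE_NAME={registry}/{service}:{tag}",
        "IS_TESTING=true",
    ]


if __name__ == "__main__":
    # Sample values for illustration only.
    for line in env_file_lines(
        "ghcr.io", "xyz-service", "refs/heads/develop", "0123456789abcdef"
    ):
        print(line)
```

Running the sketch with a refs/pull/<number>/merge reference and no head ref shows why the tag ends up starting with merge on pull request events.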

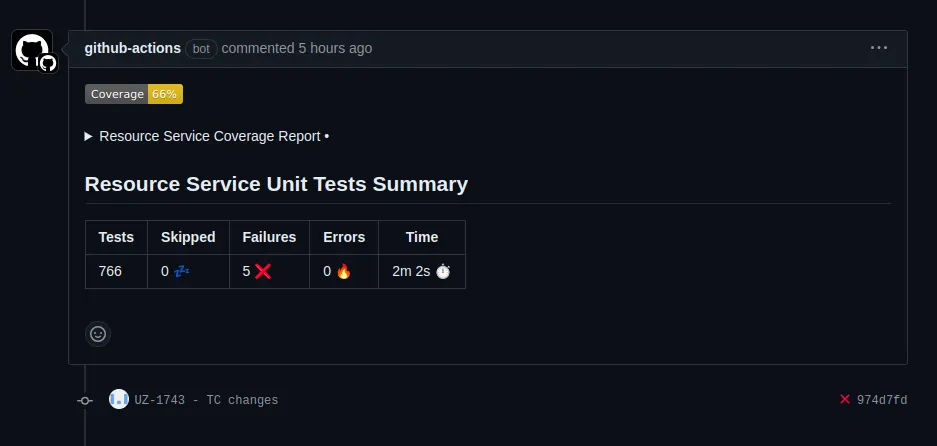

We use MishaKav/pytest-coverage-comment to post the coverage report and test summary as a comment on the pull request, and actions/upload-artifact to upload the coverage XML file as an artifact. This makes the coverage data easily accessible and reviewable directly within the GitHub interface.

          - name: Pytest coverage comment for xyz service
            if: steps.xyz-coverage.outputs.coverage == 'true'
            id: xyz-coverageComment
            uses: MishaKav/pytest-coverage-comment@main
            with:
              pytest-xml-coverage-path: deployment/tests/xyz-coverage.xml
              title: Xyz Service Coverage Report
              create-new-comment: true
              junitxml-path: deployment/tests/xyz-pytest.xml
              junitxml-title: Xyz Service Unit Tests Summary
              report-only-changed-files: true

          - name: Upload Xyz Coverage Report
            if: steps.xyz-coverage.outputs.coverage == 'true'
            # v1 and v2 of this action have been retired by GitHub
            uses: actions/upload-artifact@v4
            with:
              name: xyz-coverage.xml
              path: deployment/tests/xyz-coverage.xml
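If you want to inspect the same coverage XML locally before it reaches the PR comment, a short Python sketch like the one below can list per-file line coverage. It assumes the Cobertura-style layout that coverage.py writes (class elements carrying filename and line-rate attributes). The sample XML and the function name are made up for illustration, and this is not how the comment action itself is implemented.

```python
from __future__ import annotations

import xml.etree.ElementTree as ET

# Hypothetical, heavily trimmed coverage report used only for this example.
SAMPLE_COVERAGE_XML = """
<coverage line-rate="0.875">
  <packages>
    <package name="api">
      <classes>
        <class name="views.py" filename="api/views.py" line-rate="0.75"/>
        <class name="models.py" filename="api/models.py" line-rate="1"/>
      </classes>
    </package>
  </packages>
</coverage>
"""


def per_file_coverage(coverage_xml: str) -> dict[str, float]:
    """Map each file name in the report to its line coverage in percent."""
    root = ET.fromstring(coverage_xml)
    return {
        cls.get("filename", ""): round(float(cls.get("line-rate", "0")) * 100, 1)
        for cls in root.iter("class")
    }


if __name__ == "__main__":
    for filename, percent in per_file_coverage(SAMPLE_COVERAGE_XML).items():
        print(f"{filename}: {percent}%")
```

To run it against the real report, read deployment/tests/xyz-coverage.xml into a string and pass it to per_file_coverage.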

Finally, we surface failing tests, publish the test summary in the GitHub Actions job summary, and terminate the workflow if necessary. We also check the coverage percentage and fail the workflow if it falls below a specified threshold.

          - name: Surface failing xyz tests
            if: steps.xyz-coverage.outputs.coverage == 'true'
            uses: pmeier/pytest-results-action@main
            with:
              path: deployment/tests/xyz-pytest.xml
              summary: true
              display-options: fEX
              fail-on-empty: true
              title: Test results

          - name: terminate workflow on xyz service unit test on failure or error
            if: env.SERVICE_NAME == 'xyz-service'
            run: |
              failures=${{ steps.xyz-coverageComment.outputs.failures }}
              errors=${{ steps.xyz-coverageComment.outputs.errors }}
              # Treat a missing output as 0 so the numeric comparison stays valid
              if [ "${failures:-0}" -ne 0 ] || [ "${errors:-0}" -ne 0 ]; then
                echo "Pytest failures or errors detected. Exiting workflow."
                exit 1
              fi
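The termination logic and the coverage threshold check described above can also be expressed as one small gate script. The Python sketch below is one possible way to do it, assuming the JUnit XML that Pytest writes (testsuite elements with failures and errors attributes) and the coverage XML's top-level line-rate attribute. The function names and the 80% default threshold are hypothetical and do not come from the workflow.

```python
from __future__ import annotations

import xml.etree.ElementTree as ET


def count_failures_and_errors(junit_xml: str) -> tuple[int, int]:
    """Sum the failures and errors attributes over all testsuite elements."""
    root = ET.fromstring(junit_xml)
    suites = list(root.iter("testsuite"))
    failures = sum(int(suite.get("failures", "0")) for suite in suites)
    errors = sum(int(suite.get("errors", "0")) for suite in suites)
    return failures, errors


def coverage_percent(coverage_xml: str) -> float:
    """Read overall line coverage (in percent) from the report's root element."""
    root = ET.fromstring(coverage_xml)
    return float(root.get("line-rate", "0")) * 100


def gate(junit_xml: str, coverage_xml: str, min_coverage: float = 80.0) -> int:
    """Return a process exit code: 0 to continue, 1 to stop the workflow."""
    failures, errors = count_failures_and_errors(junit_xml)
    if failures or errors:
        print("Pytest failures or errors detected.")
        return 1
    percent = coverage_percent(coverage_xml)
    if percent < min_coverage:
        print(f"Coverage {percent:.1f}% is below the {min_coverage:.1f}% threshold.")
        return 1
    return 0


if __name__ == "__main__":
    # Tiny hand-written reports for illustration only.
    junit = '<testsuites><testsuite tests="3" failures="0" errors="0"/></testsuites>'
    coverage = '<coverage line-rate="0.9"/>'
    raise SystemExit(gate(junit, coverage))
```

In a workflow step, the script would read xyz-pytest.xml and xyz-coverage.xml from deployment/tests/ and exit with the value returned by gate, so a non-zero result fails the job.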

The second workflow, the caller, triggers the reusable Pytest and coverage workflow for the Xyz Module Service. It specifies the conditions under which the workflow should execute, including pull requests, pushes to specific branches, releases, and manual triggers. This setup ensures comprehensive testing and coverage validation for changes made to the xyz module.

    on:
      pull_request:
        types:
          - opened
        branches:
          - master
        paths:
          - 'xyz-module/service/**'
      push:
        branches:
          - develop # Development Environment
        paths:
          - 'xyz-module/service/**'
      release:
        types: [created]
      workflow_dispatch:
        inputs:
          service_name:
            description: 'Name of the service to run tests for (abc-service or xyz-service)'
            required: true
            default: 'xyz-service'
          service_path:
            description: 'Path to the service within the repository'
            required: true
            default: 'xyz-module/service'
          docker_registry:
            description: 'Docker registry URL'
            required: true
            default: 'ghcr.io'

    jobs:
      # The job id below is illustrative; the Build job it depends on is defined elsewhere in this workflow.
      xyz-pytest:
        needs: [Build]
        # inputs are only populated for workflow_dispatch runs, so fall back to the defaults on other events
        if: github.event_name == 'pull_request' && ((inputs.service_name || 'xyz-service') == 'xyz-service')
        uses: ./.github/workflows/reusable-pytest-xyz-service.yml
        with:
          service_name: ${{ inputs.service_name || 'xyz-service' }}
          service_path: ${{ inputs.service_path || 'xyz-module/service' }}
          docker_registry: ${{ inputs.docker_registry || 'ghcr.io' }}
        secrets:
          aws_access_key: ${{ secrets.AWS_ACCESS_KEY }}
          aws_secret_key: ${{ secrets.AWS_SECRET_KEY }}
          gcr_token: ${{ secrets.GCR_TOKEN }}
          repo_owner: ${{ secrets.REPO_OWNER }}
          aws_s3_bucket: ${{ secrets.AWS_S3_BUCKET }}

Pull Request: Triggered when a pull request is opened against the master branch and affects files in the xyz-module/service directory.

Push: Triggered on pushes to the develop branch affecting files in the xyz-module/service directory.

Release: Triggered on the creation of new releases.

Manual: Triggered manually using the GitHub Actions UI or API.

Inputs: Accepts inputs for service_name (abc-service or xyz-service), service_path (path to the service within the repository), and docker_registry (Docker registry URL). Note that the inputs context is only populated for manually triggered (workflow_dispatch) runs; on other events these values are empty unless a fallback is supplied.

Conditions: Runs the job only for pull requests where service_name resolves to xyz-service.

Uses: Calls the reusable workflow defined in reusable-pytest-xyz-service.yml with the specified inputs and secrets.

This workflow orchestrates the execution of the testing and coverage workflow (reusable-pytest-xyz-service.yml) for changes to the Xyz Module Service, so that code changes are validated across different stages and environments.

By setting up this CI workflow, you can ensure that your code is consistently tested, coverage reports are generated and analyzed, and any issues are addressed promptly. This not only improves code quality but also streamlines the development process, allowing you to focus on building great software.

Feel free to modify this workflow according to your specific requirements and integrate it into your GitHub repository to enhance your CI/CD pipeline. Happy coding!