The GenAI Software Stack Series (Part 1 of 2)

Intro

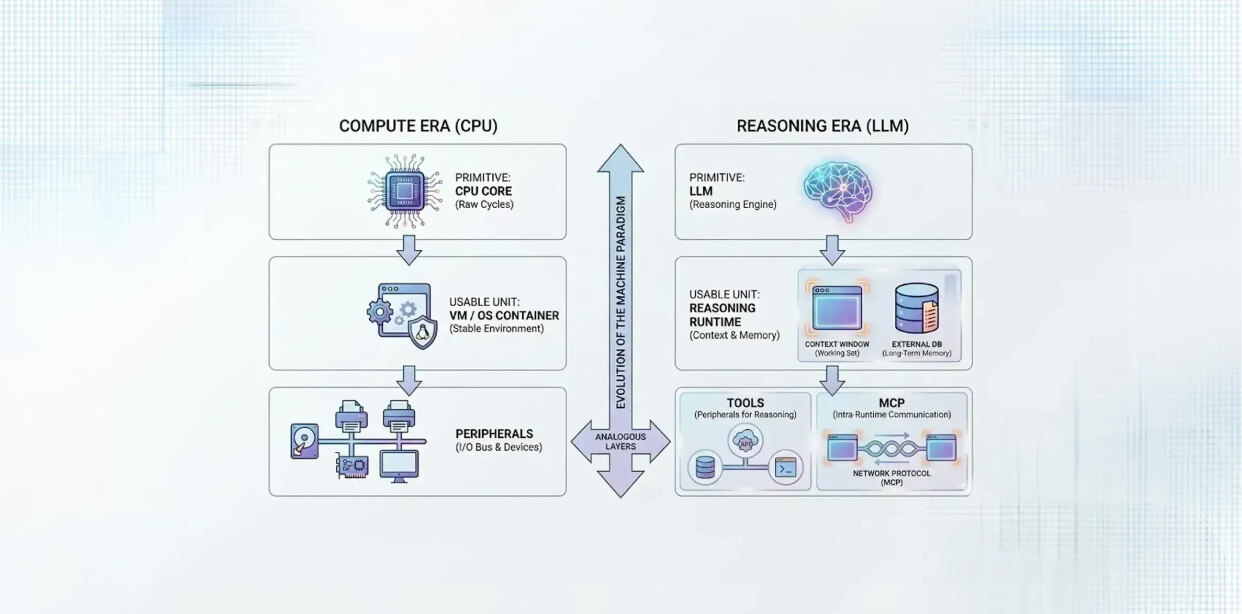

This is Part 1 of a two-part series. I originally set out, after my previous blog, to write about the "GenAI software stack," i.e., what the equivalents of compilers, frameworks, and SDLC layers might look like in a world where reasoning is now callable. But when I started sketching that stack, I kept running into the same confusion: what is the basic unit that actually ships reasoning in a usable way?

So I’m stepping one layer down first. Part 1 defines that serving unit: what I’m calling the Reasoning Runtime. It is the analogue of what a PC/VM with an OS was for Software 1.0. Part 2 will come back to the higher-layer GenAI stack once this foundation is clearer. This remains an opinion, so take it with a grain of salt.

The historical pattern we keep repeating

In the CPU era, the 0→1 shift wasn’t "a programming language" or "a framework". It was automating computation cycles on a CPU. But nobody shipped compute cycles directly. What shipped was a usable unit: a full machine, CPU plus memory, storage, and I/O, wrapped in an operating system that made it behave consistently.

That OS layer mattered because it created stable contracts over messy reality. Underneath were caches, RAM, disk, buses, devices, interrupts, and endless quirks. Above it, developers got a predictable target. That predictability is what let the software ecosystem explode: compilers, libraries, frameworks, and finally applications for end-users.

If we accept that pattern of primitive → usable unit → stack above, then the question in the era of GenAI becomes obvious: what is the equivalent usable unit for reasoning?

The GenAI Reasoning Runtime (and why naming it helps)

In general, a runtime is the execution environment that makes a workload runnable and operable. It defines how something is invoked, what resources it relies on, and what behaviors you can depend on.

In GenAI terms, the Reasoning Runtime is the environment that makes reasoning callable in a repeatable way: you submit messages/prompts (increasingly multimodal) and receive tokens or structured outputs under a set of contracts you can build against. The model matters, but the runtime is what turns a model into an abstraction you can reliably build software over.
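To make "a set of contracts you can build against" a bit more concrete, here is a minimal Python sketch of what such a contract could look like. Everything in it is hypothetical and for illustration only: `Message`, `ReasoningRuntime`, `EchoRuntime`, and `run` are names I made up, not any vendor's API, and the toy runtime just echoes words back instead of calling a model.

```python
from dataclasses import dataclass
from typing import Iterator, List, Protocol


@dataclass(frozen=True)
class Message:
    role: str     # e.g. "system", "user", "assistant"
    content: str  # text only here; real inputs are increasingly multimodal


class ReasoningRuntime(Protocol):
    """Hypothetical contract: messages in, a stream of tokens out."""

    def invoke(self, messages: List[Message], max_tokens: int) -> Iterator[str]:
        ...


class EchoRuntime:
    """Toy stand-in for a model-backed runtime; it only echoes the last message."""

    def invoke(self, messages: List[Message], max_tokens: int) -> Iterator[str]:
        tokens = messages[-1].content.split()
        # Honor the output budget: one of the behaviors a caller can depend on.
        yield from tokens[:max_tokens]


def run(runtime: ReasoningRuntime, prompt: str, max_tokens: int = 16) -> str:
    # Application code depends on the contract, not on which runtime sits behind it.
    return " ".join(runtime.invoke([Message("user", prompt)], max_tokens))
```

The point of the sketch is the shape, not the behavior: `run` would work unchanged if `EchoRuntime` were swapped for a managed platform or an on-prem engine that satisfied the same interface.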

And it’s worth saying this plainly: nothing in the market fully matches this “runtime-as-OS” view yet. Products like ChatGPT and Claude are powerful but intentionally appliance-like and closed; on-prem serving stacks expose more control but don’t unify the full contract layer either. Today, the runtime exists as pieces spread across managed platforms and on-prem engines; converging, but not yet unified.

Once you see the runtime as the serving unit, two things immediately follow: you need a notion of working set vs longer-term memory, and you need a clean way for reasoning to interact with the outside world.

Working set, external memory, and the boundary of abstraction

Reasoning has a bounded working set: the context window. I’m intentionally not mapping this to RAM management because the strategies here are application-owned. But the constraint is still real, and it shapes everything above it.

And the moment you accept a bounded working set, you get the need for external memory. Today people default to “vector DB,” but that’s just one option. Graph stores, search indexes, files, relational stores, and other indexing strategies can all play the long-term memory role. The important separation is simple: the runtime gives you the callable reasoning loop and the boundaries; the application decides what to retrieve, what to load, and how to keep it coherent.
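As a rough illustration of that separation, the sketch below shows the application-owned side: given snippets already retrieved from some external memory, it decides what actually gets loaded into the bounded working set. The assumptions are loud ones: `count_tokens` is a crude whitespace count standing in for a real tokenizer, the relevance scores are taken as given, and greedy packing by score is just one of many possible strategies.

```python
from typing import List, Tuple


def count_tokens(text: str) -> int:
    # Assumption: whitespace word count as a crude stand-in for a real tokenizer.
    return len(text.split())


def build_working_set(
    question: str,
    retrieved: List[Tuple[float, str]],  # (relevance score, snippet) pairs
    budget: int,                         # context window size, in "tokens"
) -> List[str]:
    """Greedily pack the highest-scoring snippets that still fit the budget."""
    remaining = budget - count_tokens(question)
    selected: List[str] = []
    for _score, snippet in sorted(retrieved, key=lambda pair: pair[0], reverse=True):
        cost = count_tokens(snippet)
        if cost <= remaining:
            selected.append(snippet)
            remaining -= cost
    # The question goes last, after the supporting context.
    return selected + [question]
```

For example, with a budget of 9 and a three-word question, a five-word snippet is skipped while a three-word and a two-word snippet are loaded. Where the snippets came from (vector DB, graph store, search index, files) is invisible to this function, which is the boundary the paragraph above describes.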

But memory alone doesn’t make reasoning useful. Compute became economically useful when it could interact with the world through I/O. Reasoning is heading the same way, through tools.

Tools as I/O — and why MCP feels more like TCP than PCI

Peripherals made CPU compute useful: network, disk, displays, printers. Tools play that role for reasoning: databases, internal APIs, workflows, code execution, enterprise systems. This is where the OS analogy is strongest at a gut level: reasoning without I/O is impressive; reasoning with I/O becomes operational.

Now here’s the nuance: tool usage is not abstracted like OS device drivers, at least not yet. It’s visible, app-designed, and still somewhat bespoke. That’s not a flaw in the analogy; it’s a maturity signal.

MCP fits this story better as a TCP-style protocol than as a “device bus”. It is a standardized way for one system to talk to another and exchange structured context and capabilities. The far end of that connection is often not a dumb tool at all: it can be a full service with its own execution loop (even its own reasoning runtime) that orchestrates work, invokes downstream tools, and returns results. In other words, MCP doesn’t just plug tools into a model; it makes tools and reasoning-enabled services addressable endpoints you can reliably connect to, which is much closer to how modern networked systems scale in practice. (I know I am going against the “MCP = USB port” analogy, but that analogy doesn’t match how I think about it.)
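Here is a small Python sketch of the "addressable endpoints" idea. It is deliberately not the MCP wire format or any MCP SDK: `Network`, the address strings, and both endpoints are hypothetical names invented for illustration. What it tries to show is only the shape of the argument, namely that the caller cannot tell whether the far end is a simple tool or a service that runs its own loop and calls other endpoints.

```python
from typing import Callable, Dict

Endpoint = Callable[[dict], dict]


class Network:
    """Hypothetical registry that maps addresses to endpoints."""

    def __init__(self) -> None:
        self._endpoints: Dict[str, Endpoint] = {}

    def register(self, address: str, endpoint: Endpoint) -> None:
        self._endpoints[address] = endpoint

    def call(self, address: str, request: dict) -> dict:
        if address not in self._endpoints:
            return {"error": f"unknown endpoint: {address}"}
        return self._endpoints[address](request)


def inventory_lookup(request: dict) -> dict:
    # A "dumb" tool: a fixed lookup with no logic of its own.
    stock = {"widget": 12, "gadget": 0}
    return {"in_stock": stock.get(request["sku"], 0)}


def make_order_service(net: Network) -> Endpoint:
    # A service with its own execution loop: it calls a downstream
    # endpoint before deciding what to return to its caller.
    def order_service(request: dict) -> dict:
        available = net.call("tools/inventory", {"sku": request["sku"]})["in_stock"]
        status = "confirmed" if available >= request["quantity"] else "backordered"
        return {"status": status}

    return order_service


net = Network()
net.register("tools/inventory", inventory_lookup)
net.register("services/orders", make_order_service(net))
```

Calling `net.call("services/orders", {"sku": "widget", "quantity": 5})` looks identical to calling the inventory tool directly, even though the order service orchestrates a downstream call first. That uniformity between simple tools and full services is what makes the TCP comparison feel closer to me than the bus comparison.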

Why this matters (and what Part 2 will build on)

The OS effect wasn’t just usability. It was the creation of a stable target that made software production predictable and ecosystems inevitable.

GenAI is early, but you can see the same convergence beginning: runtimes are forming (on-prem and managed), contracts are stabilizing, and protocols like MCP are giving us the beginnings of standard connectivity between reasoning-enabled services.

I want to take the Reasoning Runtime as the baseline serving unit and sketch what the Software 3.0 stack looks like above it. So what should the equivalents of libraries, frameworks, SDLC patterns, and production discipline be when the core resource is callable reasoning?

In Part 2, I will be drawing layers, not a potpourri of tools: what a coherent GenAI stack actually looks like when it’s built on top of the runtime we have identified here.